Harvest no longer has to be the busiest time of the year. It can simply be the most profitable.

A gentle picker

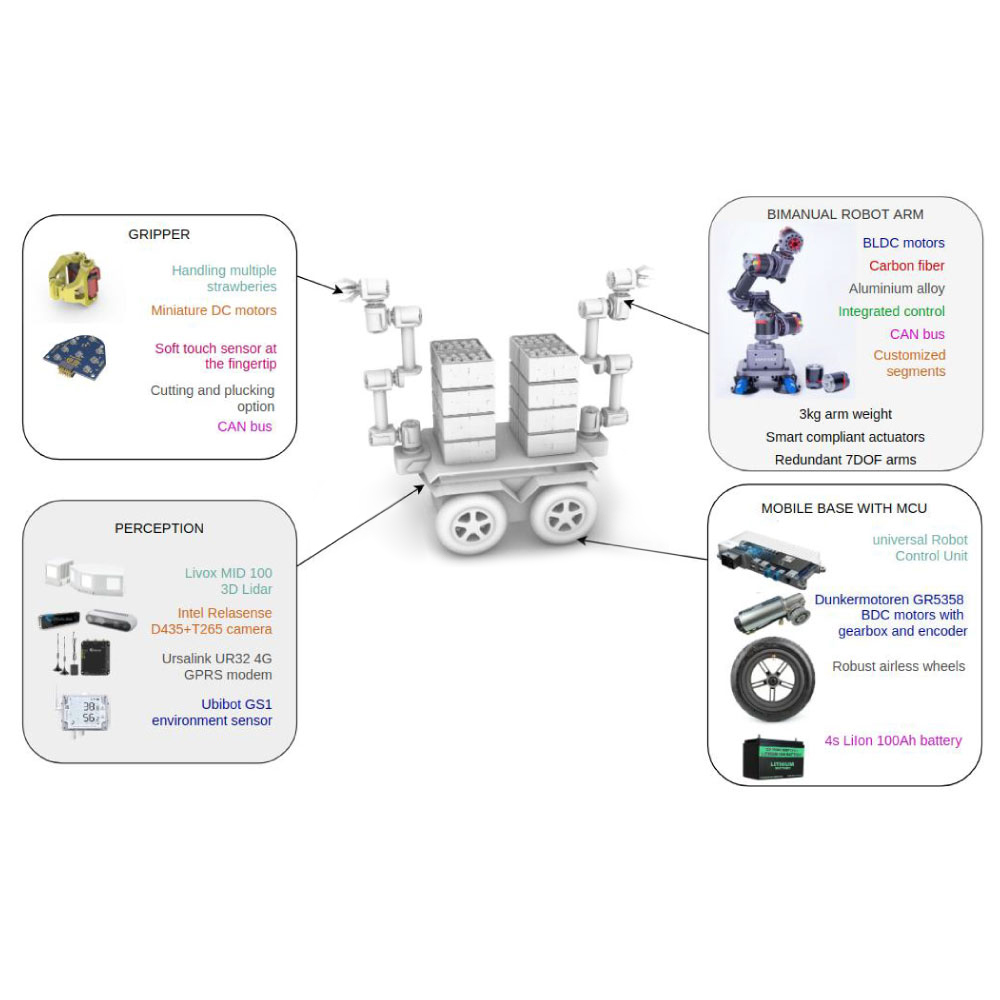

The bimanual arm design brings human-like manipulation, with multiple degrees of freedom for adaptable, flexible motion. This enables Berry-bot to harvest strawberries growing in clusters, which is still an unsolved challenge in agricultural robotics.

Innovative & Resourceful

Most other solutions lose precision when a strawberry is occluded by other fruits, leaves or other objects. Berry-bot’s bimanual arm setup overcomes this through coordinated control of its two arms: one arm moves the objects occluding the strawberries, allowing the perception system to register the hidden fruit and guide the second arm to harvest it.

Booming profit

By building the bimanual arms from lightweight, industrial-grade joint modules instead of existing robotic arms, which are expensive and inefficient, we have combined functionality, robustness and reliability, and found a sweet spot between complexity and production cost.

Perceptive & Durable

The team behind Berry-bot brings together people with diverse backgrounds and experience in robot design, development and integration, vision, control and perception. Under the hood, Berry-bot is powered by two main modules: robot control software built around ROS, and data acquisition with cloud-based data processing. A high-capacity battery gives the robot long autonomy in the field. It is equipped with an advanced perception system consisting of two 3D lidars, four cameras and an environment sensor.
